Are your websites tanking in the search engine results pages (SERPs), and you don’t know why? If it isn’t the content itself, the problem could be how your website is built. Ranking on Google depends in part on two things: the code used to build your website and how Google reads it. Not all web technologies are created equal in the eyes of Googlebot.

For your pages to be evaluated properly by web crawlers, they have to be in the search engine’s index and indexed correctly. Neglecting this step can affect how your pages are read by the spiders that crawl your content.

This isn’t as much of a problem if your pages are HTML-based. Just pay attention to current SEO best practices, create killer content, and diversify your digital marketing. But, if you use JavaScript (JS) – and most developers do – it can put extra barriers in the way that will affect your page rank and ability to draw traffic.

There are ways to work around this problem, and Google is making more of an effort to handle JavaScript. But first, let’s take a deep dive into the difference between the two technologies and how Googlebot sees them.

How Web Crawlers Work: HTML Versus JS

Website evaluation is a multi-stage process. When you launch or update a website, search engine bots, called spiders, crawl each page, looking for things like responsive design, freshness, links, keywords, and images. Working page by page, they pass the information they collect on to Google’s indexing system, called Caffeine.

Once the process is completed, Google looks at the content in this index to decide how to rank the page(s). If everything on your website is in line with current standards for SEO and user experience (UX), your website is more likely to rank higher when users search for related content.

With plain HTML, the bots can pass the raw markup on to Caffeine to be read and evaluated. When the content is generated by JavaScript, the initial HTML is little more than a shell, meaning there’s little to evaluate and index beyond the links and metadata until the page is rendered.

To put it simply, the less HTML and CSS Googlebot needs to parse, the faster it can access and evaluate the content on your site. Overly complex redirect chains for forced SSL can also be a complication. If this is an issue, you can look for hosting companies that offer free SSL, and keep detailed logs to spot major issues in crawl time.
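
To make the redirect problem concrete, here is a minimal Python sketch that counts the hops a crawler has to follow before it reaches the final page. The `redirect_chain` helper and the `REDIRECTS` table are hypothetical and only illustrate the idea; a real audit would read the rules from your server configuration or logs.

```python
def redirect_chain(start_url, redirects, max_hops=10):
    """Follow `redirects` (a dict of source URL -> target URL) from start_url.

    Returns the list of URLs visited, starting URL included.
    """
    chain = [start_url]
    while chain[-1] in redirects:
        target = redirects[chain[-1]]
        if target in chain:
            raise ValueError(f"redirect loop at {target}")
        if len(chain) > max_hops:
            raise ValueError(f"more than {max_hops} redirects")
        chain.append(target)
    return chain


# Hypothetical rules for a site that forces SSL first and a www host second.
REDIRECTS = {
    "http://example.com/": "https://example.com/",
    "https://example.com/": "https://www.example.com/",
}

chain = redirect_chain("http://example.com/", REDIRECTS)
print(" -> ".join(chain))
print(f"{len(chain) - 1} redirects before the final page")
```

In this made-up setup, pointing `http://example.com/` straight at `https://www.example.com/` would cut the chain from two hops to one.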

Blank web pages tend not to rank well in search engines.
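
A rough way to see what a crawler gets before any rendering happens is to extract the visible text from the raw HTML. The sketch below uses only Python's standard `html.parser`. The two sample pages and the `visible_text` helper are made up for illustration, and this is not how Google itself evaluates pages.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text that sits outside <script> and <style> tags."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is not inside a script or style tag.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(raw_html: str) -> str:
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)


# Hypothetical pages: one server-rendered, one an empty JavaScript shell.
STATIC_PAGE = "<html><body><h1>Pricing</h1><p>Plans start at $5.</p></body></html>"
JS_SHELL = '<html><body><div id="root"></div><script src="/app.js"></script></body></html>'

print(repr(visible_text(STATIC_PAGE)))
print(repr(visible_text(JS_SHELL)))
```

If the second kind of result comes back for your own pages, everything a visitor sees depends on JavaScript being rendered.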

Browsers are able to render the JS and make the content viewable. Caffeine has evolved since its introduction in 2010 to render JS the same way a browser can. This is due to the introduction of the Google WRS (Web Rendering Service) that’s based on Chrome 41 and the newly released Chrome 69. However, the process is slow and sucks up a lot of resources.

The trick is to properly implement JS in a way that makes it renderable faster during the Google indexing process. Neglecting to do so will lead to the Hulu effect.

Optimizing JavaScript for Web Indexing

Googlebot can be denied access through robots.txt. That means step one is to make sure your robots.txt allows the spiders to access your JavaScript-powered website. Step two is to submit an XML sitemap with your URLs through Google Search Console.
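
Both steps can be checked locally before you touch Search Console. The following Python sketch uses the standard library's `urllib.robotparser` to list URLs that a set of robots.txt rules would block for Googlebot, then builds a bare-bones sitemap. The rules, URLs, and helper names are assumptions for illustration only.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that accidentally blocks the site's script files.
ROBOTS_TXT = """\
User-agent: *
Disallow: /assets/js/
"""

# Hypothetical URLs to check.
URLS = [
    "https://example.com/",
    "https://example.com/blog/post",
    "https://example.com/assets/js/app.js",
]


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


def build_sitemap(urls):
    """Build a minimal XML sitemap (only <loc> entries) as a string."""
    urlset = ET.Element("urlset", xmlns="http://www.sitemaps.org/schemas/sitemap/0.9")
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


print("Blocked for Googlebot:", blocked_urls(ROBOTS_TXT, URLS))
print(build_sitemap(URLS[:2]))
```

Here the script file is reported as blocked, which would stop a crawler from fetching the code it needs to render the page.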

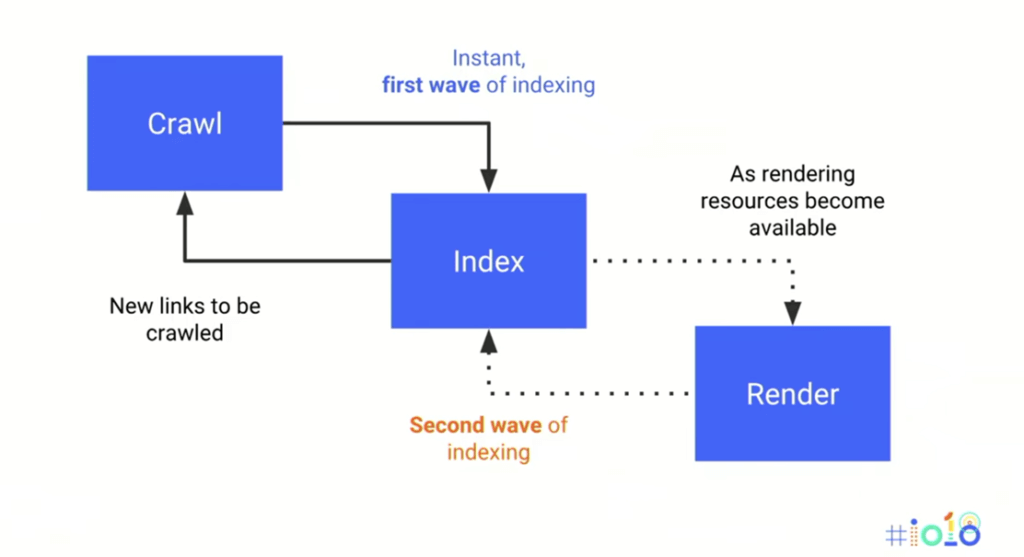

Rendering makes your website viewable in Caffeine, but the process is slow and cumbersome. The HTML-only content from the first crawl is indexed immediately. The spiders then go back to crawling other pages while the JavaScript content waits to be rendered in Caffeine.

The reason this process can take a while, and become quite costly, is that low-priority pages are not indexed as quickly as those that are properly optimized for indexing. Several pages can sit in a queue waiting while bots evaluate other pages. Once there are enough resources, those pages waiting in limbo are finally evaluated and ranked.

Indexing can be sped up by minding content quality and prioritizing the components that Google favors. These include link quality and relevancy, update frequency, traffic volume, and website speed.

One thing to keep in mind about loading and crawling times is that JS-based websites will load a lot slower on a cheaper hosting solution. A study by Aussie Hosting showed that cheap web hosts perform at almost one-third the speed of regular hosting solutions.

This is because cheap hosting offers fewer resources. When choosing a hosting company, make sure that you have enough bandwidth, processing power, and storage to handle your traffic during peak and normal operating conditions.

Your code and images should be properly optimized by using fewer files, reducing image size, using alt text for proper image indexing, and minifying CSS and JS code. Code should also be compressed to eliminate redundancy, white space, and unnecessary comments. Get rid of old, unused, or unsupported plugins, and choose the best hosting provider you can find.
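
As a rough illustration of what minifying and compressing buy you, the Python sketch below strips comments and extra white space from a small stylesheet and compares sizes before and after gzip. `naive_minify_css` is a deliberately simple, hypothetical helper that can break on edge cases such as strings containing braces, so use a dedicated minifier in a real build.

```python
import gzip
import re


def naive_minify_css(css: str) -> str:
    """Very rough CSS minifier: for illustration only."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)  # drop comments
    css = re.sub(r"\s+", " ", css)                         # collapse white space
    css = re.sub(r"\s*([{}:;,])\s*", r"\1", css)           # trim around punctuation
    return css.strip()


def gzip_size(text: str) -> int:
    """Size in bytes of the text after gzip compression."""
    return len(gzip.compress(text.encode("utf-8")))


# Hypothetical stylesheet fragment.
CSS = """
/* Primary button */
.button {
    color: #ffffff;
    background-color: #0055aa;
}
"""

minified = naive_minify_css(CSS)
print(minified)
print("original bytes:", len(CSS.encode("utf-8")))
print("minified bytes:", len(minified.encode("utf-8")))

# gzip pays off on larger files, so repeat the sample to simulate one.
bundle = CSS * 50
print("bundle bytes:", len(bundle.encode("utf-8")), "gzipped:", gzip_size(bundle))
```

Minification shrinks the file itself, while gzip shrinks what travels over the wire. The two are usually applied together.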

In addition to optimizing your website for speed, there are two tools that will allow you to view your page the way Google does and make sure that it’s properly rendered for indexing. The first is to install Chrome 41 on your staging device. Then, you can use the Fetch and Render URL inspection tool from Google Search Console or an alternative. That will tell you how well your pages are optimized for indexing and what you need to tweak.

Final Thoughts

Changes in Google algorithms can make it difficult for website owners and developers to keep up with the latest best practices for coding and design. Between mobile-first indexing and trouble with JS rendering, it’s a lot to track and implement. Using the available tools will take some of the mystery out of why you’re not ranking as well as you should. Then, you’ll have a better understanding of how to fix your page and claim your rightful place in the SERPs.
